The financial industry generates trillions of transactions per year. Rule-based fraud engines, static KYC pipelines, and siloed security tools were never designed for the velocity, variety, and adversarial creativity of modern threat actors. Agentic AI changes the equation.

Agentic AI in fintech refers to autonomous systems that perceive data, plan multi-step actions, execute tasks across systems, and self-improve — all while maintaining full audit trails for compliance. Unlike copilot tools that surface suggestions for humans to act on, agents close the loop entirely: flag → investigate → report → block, without waiting for a human in the middle.

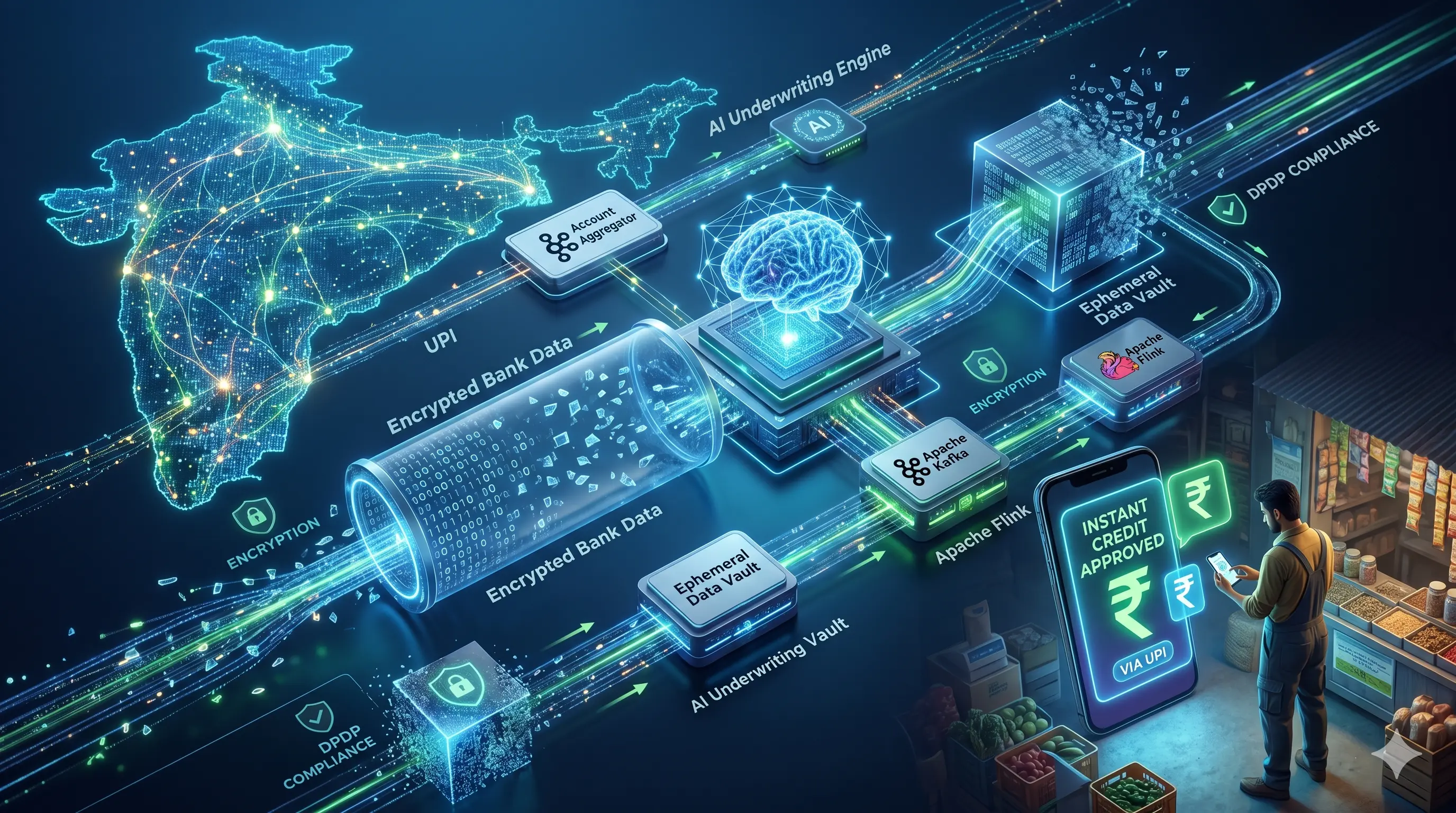

This post walks through a four-phase AWS architecture for deploying adaptive real-time intelligence across fraud detection, AML/KYC, and cybersecurity workloads — with concrete service choices, agent orchestration patterns, and the safeguards regulators require.

Key Statistics

| Metric | Value |
|---|---|
| Projected global fraud losses | $10.5 trillion |
| False positive reduction vs rule-based systems | Up to 90% |
| Fraud reduction via Stripe Radar | 38% |

What “Agentic” Actually Means

Most financial institutions still run fraud detection as a scoring pipeline: ingest → feature engineer → model score → rule threshold → alert. The alert goes to a queue. An analyst opens a ticket. Hours pass. Funds have moved.

Agentic AI replaces that queue with an autonomous investigator. The agent perceives a risk signal, decides what to investigate, pulls transaction history, screens sanctions databases, cross-references external threat feeds, escalates or resolves the case, and drafts a Suspicious Activity Report (SAR) for sign-off, all in real time and without the alert sitting in an analyst queue.

Key distinction: A copilot surfaces information. An agent acts on it. The shift from copilot to agent is what compresses case resolution from hours to seconds and enables end-to-end automation of AML/KYC workflows.

Platforms like Featurespace ARIC and Stripe Radar already operate at this level — processing trillions in volume and adapting continuously to new fraud patterns, including AI-generated synthetic identities and behavioral mimicry attacks that evade traditional models.

The Four-Phase AWS Architecture

Deploying adaptive real-time intelligence at financial scale requires moving through four architectural phases. Each phase builds on the last and corresponds to increasing autonomy, observability, and regulatory coverage.

Phase 1 — Signal Ingestion & Feature Engineering

Stream transaction events, user behavior, device telemetry, and merchant data into a unified feature store with sub-second latency.

AWS Services: Amazon MSK (Kafka), Amazon Kinesis, SageMaker Feature Store

Phase 2 — Real-Time Scoring & Anomaly Detection

Run ensemble ML models online — combining supervised classifiers, graph neural networks, and behavioral baselines — to produce risk scores with explainability outputs.

AWS Services: Amazon Fraud Detector, Amazon Neptune ML, SageMaker Endpoints

Phase 3 — Agentic Orchestration & Case Automation

Deploy autonomous agents that investigate flagged events, orchestrate tool calls across internal and external systems, and execute resolution actions within defined authority.

AWS Services: AWS Step Functions, AWS Lambda, Amazon Bedrock Agents

Phase 4 — Compliance, Audit & Continuous Improvement

Log every agent action, generate explainable outputs for ECOA/FCRA, feed resolved cases back into the training pipeline, and maintain SOC 2 / EU AI Act compliance posture.

AWS Services: Amazon S3 + Athena, Amazon QuickSight, Amazon CloudWatch

Phase 1: Signal Ingestion & Feature Engineering

Streaming at transaction speed

Fraud doesn’t wait. Agents need features computed and available within milliseconds of a transaction hitting the wire. On AWS, Amazon MSK (Managed Streaming for Apache Kafka) ingests raw transaction events at scale: producers write transaction records to an input topic, and a PyFlink job on Amazon Managed Service for Apache Flink (formerly Amazon Kinesis Data Analytics) processes each event in real time, applying velocity checks, device fingerprinting, and behavioral baseline lookups before writing enriched records to an output topic.
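The velocity check mentioned above can be illustrated with a small sliding-window counter. This is a minimal pure-Python sketch, not the PyFlink job itself: the `VelocityWindow` class, the 60-second window, and the feature names are assumptions made for illustration, and it assumes events for an account arrive in timestamp order.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class VelocityWindow:
    """Sliding-window counters for one account (hypothetical helper)."""
    window_seconds: int = 60
    events: deque = field(default_factory=deque)  # (timestamp, amount) pairs

    def update(self, timestamp: float, amount: float) -> dict:
        # Record the new event, then evict everything older than the window.
        self.events.append((timestamp, amount))
        cutoff = timestamp - self.window_seconds
        while self.events and self.events[0][0] <= cutoff:
            self.events.popleft()
        return {
            "txn_count_window": len(self.events),
            "txn_amount_window": sum(amount for _, amount in self.events),
        }


window = VelocityWindow(window_seconds=60)
window.update(0.0, 20.0)
window.update(30.0, 35.0)
features = window.update(70.0, 500.0)  # the event at t=0 has aged out
```

In a streaming job the same logic would typically live in per-account keyed state rather than in a Python object held in memory.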

The enriched features land in Amazon SageMaker Feature Store, which maintains both an online store (low-millisecond reads for model inference) and an offline store (S3-backed, for training and batch analytics). This dual-store pattern is critical: agents querying account history during an investigation hit the online store; the retraining pipeline consumes the offline store.
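As a rough sketch of the write path, the helper below converts an enriched feature dictionary into the list of `FeatureName` / `ValueAsString` pairs that the Feature Store `PutRecord` call accepts. The feature group name, the `account_id` and `event_time` feature names, and the helper itself are assumptions for illustration; the actual AWS call is left as a comment so the block runs without credentials.

```python
from datetime import datetime, timezone


def to_feature_record(account_id: str, features: dict, event_time: datetime) -> list:
    """Convert a feature dict into the list-of-dicts shape used by PutRecord."""
    record = [
        {"FeatureName": "account_id", "ValueAsString": account_id},
        {"FeatureName": "event_time",
         "ValueAsString": event_time.strftime("%Y-%m-%dT%H:%M:%SZ")},
    ]
    # Sort for a deterministic order; every value is sent as a string.
    for name, value in sorted(features.items()):
        record.append({"FeatureName": name, "ValueAsString": str(value)})
    return record


record = to_feature_record(
    "acct-1",
    {"txn_count_window": 2, "txn_amount_window": 535.0},
    datetime(2024, 1, 1, tzinfo=timezone.utc),
)

# With boto3 installed and credentials configured, the write would look like:
# boto3.client("sagemaker-featurestore-runtime").put_record(
#     FeatureGroupName="txn-features",  # hypothetical feature group
#     Record=record,
# )
```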

Signal sources agents integrate

- Transaction velocity & amounts

- Device fingerprint & IP geolocation

- User behavioral biometrics

- Merchant category codes

- Sanctions & PEP watchlists

- Blockchain analytics

- External threat intelligence feeds

- Session anomaly signals

Integrating signals from all these sources — rather than scoring transactions in isolation — is how agentic systems reduce false positives by 70–90% compared to rule-based engines. A transaction that looks suspicious in isolation looks routine when the full behavioral context is present, and vice versa.

Phase 2: Real-Time Scoring & Anomaly Detection

Ensemble scoring with explainability

Amazon Fraud Detector serves as the primary scoring engine for transaction fraud, combining the Online Fraud Insights model — a supervised ML model trained on your labeled fraud data — with a rule engine that enforces business logic thresholds. The service scales automatically to 200+ fraud predictions per second and returns evaluations with minimal latency, enabling synchronous scoring in the payment flow without degrading user experience.

For AML and network-level fraud (collusion rings, synthetic identity networks), Amazon Neptune ML applies graph neural networks to the transaction graph. Neptune’s real-time inductive inference capability means new entities can be scored immediately against an existing GNN model without retraining — a critical requirement for live account onboarding and real-time wire screening.

For custom anomaly detection, Amazon SageMaker hosts two complementary model types: Random Cut Forest (RCF) for unsupervised anomaly detection, and XGBoost for supervised fraud classification. The ensemble combines both scores, allowing the system to catch known fraud patterns (XGBoost) and novel unknown patterns (RCF) simultaneously.
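One simple way to combine the two scores is a weighted blend. The sketch below is an assumption about how such an ensemble could be wired, not a description of a specific AWS feature: the 3-sigma normalization of the RCF score and the 0.7 weight on XGBoost are illustrative choices that would need calibration on real data.

```python
def normalize_rcf(score: float, baseline_mean: float, baseline_std: float) -> float:
    """Map a raw RCF anomaly score to [0, 1] with a ramp from the mean to mean + 3 std."""
    if baseline_std <= 0:
        raise ValueError("baseline_std must be positive")
    z = (score - baseline_mean) / baseline_std
    return min(max(z / 3.0, 0.0), 1.0)


def ensemble_risk(xgb_probability: float, rcf_normalized: float,
                  xgb_weight: float = 0.7) -> float:
    """Weighted average of the supervised and unsupervised scores."""
    if not 0.0 <= xgb_weight <= 1.0:
        raise ValueError("xgb_weight must be in [0, 1]")
    return xgb_weight * xgb_probability + (1.0 - xgb_weight) * rcf_normalized


# A transaction XGBoost considers low-risk but RCF finds highly unusual.
novel = ensemble_risk(xgb_probability=0.2,
                      rcf_normalized=normalize_rcf(4.0, 1.0, 1.0))
```

With these illustrative weights, a strongly anomalous but previously unseen pattern still lifts the combined score well above what the supervised model alone would report.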

Explainability requirement: Regulators under ECOA and FCRA require that adverse decisions be explainable to the customer. Every model score must be accompanied by SHAP values or equivalent feature importance outputs — these are passed to the compliance layer and used by agents when generating human-readable case summaries.
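To make the hand-off to the compliance layer concrete, the sketch below turns per-feature attribution values into ranked, human-readable reasons. The attribution values, feature names, and descriptions are made up for illustration; in practice the values would come from a SHAP explainer run against the deployed model.

```python
def top_reasons(shap_values: dict, descriptions: dict, k: int = 3) -> list:
    """Return descriptions of the k features that pushed the risk score up the most."""
    positive = [(name, value) for name, value in shap_values.items() if value > 0]
    positive.sort(key=lambda item: item[1], reverse=True)
    # Fall back to the raw feature name when no description is registered.
    return [descriptions.get(name, name) for name, _ in positive[:k]]


# Hypothetical attributions for one scored transaction.
shap_values = {
    "txn_velocity_1h": 0.42,
    "new_device": 0.31,
    "account_age_days": -0.18,
    "geo_distance_km": 0.05,
}
descriptions = {
    "txn_velocity_1h": "Unusually many transactions in the last hour",
    "new_device": "Activity from a device not previously seen on the account",
}
reasons = top_reasons(shap_values, descriptions, k=2)
```

Features with negative attributions lowered the score, so they are excluded from the reasons given for an adverse decision.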

Account Takeover Detection

For account takeover (ATO) fraud, AWS WAF Fraud Control provides device fingerprinting and bot detection at the perimeter, while Amazon Fraud Detector’s ATO model evaluates login events using the same scoring infrastructure. Session anomaly signals — unusual geolocation, new device, atypical typing cadence — flow through Kinesis into the same feature pipeline and are scored continuously during the session, not just at login.
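An "unusual geolocation" signal could, for example, be derived from an impossible-travel check between consecutive session events. The following is a minimal sketch under that assumption; the 900 km/h speed limit and the tuple layout are illustrative, and IP-based geolocation is noisy enough that a real deployment would treat this as one signal among several.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(prev: tuple, curr: tuple, max_speed_kmh: float = 900.0) -> bool:
    """prev and curr are (lat, lon, unix_seconds) for consecutive events."""
    distance = haversine_km(prev[0], prev[1], curr[0], curr[1])
    # Clamp elapsed time to one second to avoid dividing by zero.
    hours = max(curr[2] - prev[2], 1.0) / 3600.0
    return distance / hours > max_speed_kmh


london = (51.5074, -0.1278)
new_york = (40.7128, -74.0060)
one_hour_apart = is_impossible_travel((*london, 0), (*new_york, 3600))
ten_hours_apart = is_impossible_travel((*london, 0), (*new_york, 36000))
```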

Phase 3: Agentic Orchestration & Case Automation

The agent loop: flag → investigate → resolve → report

When Amazon Fraud Detector or Neptune ML flags a transaction above the risk threshold, it publishes an event to Amazon EventBridge. This triggers an AWS Step Functions state machine — the backbone of the agentic workflow. Step Functions orchestrates a sequence of Lambda functions (tools) that the agent can call: pull account history, query sanctions APIs, check the device reputation database, escalate to human review, block the transaction, or file a SAR draft.
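The hand-off to EventBridge can be sketched as a small function that builds a `PutEvents` entry once a score crosses the threshold. The source name, detail type, bus name, and payload fields below are hypothetical; only the entry keys (`Source`, `DetailType`, `EventBusName`, `Detail`) follow the EventBridge API, and the publish call is left as a comment so the block runs offline.

```python
import json
from typing import Optional


def build_risk_event(transaction_id: str, risk_score: float, threshold: float,
                     bus_name: str = "fraud-events") -> Optional[dict]:
    """Return a PutEvents entry for a flagged transaction, or None if below threshold."""
    if risk_score < threshold:
        return None
    return {
        "Source": "custom.fraud.scoring",    # hypothetical source name
        "DetailType": "RiskScoreExceeded",   # hypothetical detail type
        "EventBusName": bus_name,            # hypothetical event bus
        # EventBridge expects Detail as a JSON-encoded string.
        "Detail": json.dumps({"transaction_id": transaction_id,
                              "risk_score": risk_score}),
    }


entry = build_risk_event("txn-123", risk_score=0.93, threshold=0.80)

# With boto3 installed and credentials configured:
# boto3.client("events").put_events(Entries=[entry])
```

An EventBridge rule matching this source and detail type would then start the Step Functions state machine.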

Amazon Bedrock Agents provides the LLM-powered reasoning layer. Given the risk score, SHAP explanations, and account context, the Bedrock agent decides which tools to call in what order — a form of chain-of-thought planning grounded in real data. The agent operates within a defined authority framework: it can block transactions autonomously below a dollar threshold, but must escalate cases above that threshold or involving politically exposed persons (PEPs) for human review.
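Because the reasoning layer is an LLM, it is reasonable to enforce the authority framework in deterministic code outside the model. The guard below is a sketch of that idea: the `Case` fields, the action names, and the 1,000 USD limit are assumptions for illustration, not values from any particular deployment.

```python
from dataclasses import dataclass

ALLOWED_ACTIONS = {"block", "clear", "escalate"}


@dataclass(frozen=True)
class Case:
    amount_usd: float
    involves_pep: bool


def authorize(proposed_action: str, case: Case,
              auto_limit_usd: float = 1000.0) -> str:
    """Downgrade an agent's proposed action to 'escalate' when it exceeds its authority."""
    if proposed_action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {proposed_action!r}")
    if proposed_action == "escalate":
        return "escalate"
    # PEP involvement or an amount at or above the limit always goes to a human.
    if case.involves_pep or case.amount_usd >= auto_limit_usd:
        return "escalate"
    return proposed_action


small_block = authorize("block", Case(amount_usd=250.0, involves_pep=False))
large_block = authorize("block", Case(amount_usd=5000.0, involves_pep=False))
pep_clear = authorize("clear", Case(amount_usd=50.0, involves_pep=True))
```

Keeping this check in a Lambda or Step Functions state means a mistaken or manipulated model output cannot widen the agent's authority.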

Agentic Fraud Investigation Loop

Risk Score Fired (EventBridge)

↓

Step Functions state machine activated

↓

Bedrock Agent — plans investigation steps

↓

Tool Calls via Lambda: History · Sanctions · Devices

↓

Decision: Block · Escalate · Clear

↓

SAR Draft + Audit Log → S3 · Athena · QuickSight

Fraud Detection: closing the loop automatically

Agents monitor billions of transactions, detecting behavioral anomalies such as unusual velocity patterns, geographic impossibilities, or merchant category mismatches. Crucially, they outperform copilot-style tools by completing the full investigative loop without waiting for human input. Lloyds Banking Group’s deployment of agentic fraud investigation has dramatically reduced case turnaround times — what previously required analyst hours now completes in seconds for the majority of cases, freeing human investigators for genuinely complex edge cases.

AML/KYC: automating the compliance workflow

For Anti-Money Laundering, agents automate the three core workflow components: transaction monitoring (Neptune ML graph scoring), screening (sanctions and PEP database lookups via Lambda), and SAR filing (Bedrock agent drafts the narrative, Step Functions routes for human sign-off before submission). By integrating with blockchain analytics APIs, agents can trace the full chain of custody for crypto-related transactions in real time — a capability that previously required specialized human analysts.
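The screening step can be illustrated with a simple fuzzy name match. This sketch uses the standard library's `difflib` as a stand-in for a real screening service; the watchlist entries are made-up names, and the 0.85 similarity threshold is an arbitrary assumption. Production screening usually also handles transliteration, aliases, and dates of birth.

```python
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so formatting differences do not matter."""
    return " ".join(name.lower().split())


def screen_name(name: str, watchlist: list, threshold: float = 0.85) -> list:
    """Return (entry, similarity) pairs at or above the threshold, best match first."""
    hits = []
    for entry in watchlist:
        ratio = SequenceMatcher(None, normalize(name), normalize(entry)).ratio()
        if ratio >= threshold:
            hits.append((entry, round(ratio, 2)))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Fictional watchlist used only for this example.
watchlist = ["Ivan Petrov", "Maria Gonzales"]
exact = screen_name("ivan   PETROV", watchlist)
near = screen_name("Ivan Petrof", watchlist)
clean = screen_name("John Smith", watchlist)
```

Any hit would be attached to the case as evidence for the agent and the human reviewer rather than treated as a final determination.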

For KYC onboarding, agents verify identity documents and biometric selfies via Amazon Rekognition and third-party liveness detection APIs, cross-reference the identity against watchlists, and guide users proactively when verification steps fail — nudging users to resubmit a clearer document or retry a liveness check, rather than silently dropping them. Genpact’s agentic KYC deployments handle Level 1 reviews entirely autonomously, reserving human review for high-risk or ambiguous cases, which boosts completion rates and reduces onboarding drop-off.

Cybersecurity: behavioral defense at machine speed

The same agentic architecture extends naturally to cybersecurity. Amazon GuardDuty and Amazon Security Hub surface threat findings; agents triage findings, correlate across accounts and regions, and execute automated remediation playbooks via AWS Systems Manager Automation. A compromised credential triggering an unusual API call pattern is investigated, the session revoked, and the incident ticket created — all before a human SOC analyst would have seen the initial alert.

Phase 4: Compliance, Audit & Continuous Improvement

Explainability and audit trails

Every agent action is logged with full provenance: which signals triggered the investigation, which tools were called, what reasoning led to the decision, and what action was taken. These logs flow to Amazon S3 for long-term retention and are queryable via Amazon Athena for regulatory examinations. Amazon QuickSight surfaces real-time fraud analytics dashboards for the fraud management team and generates the periodic reporting required by regulators.
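A provenance record of this kind might be serialized as one JSON object per case and written under a date-partitioned S3 prefix so Athena can prune by day. The field names, the `audit/dt=...` key layout, and the helper below are assumptions for illustration.

```python
import json
from datetime import datetime, timezone


def audit_record(case_id: str, signals: list, tool_calls: list,
                 reasoning: str, action: str, at: datetime) -> tuple:
    """Return (s3_key, json_line) describing one agent decision."""
    body = {
        "case_id": case_id,
        "timestamp": at.isoformat(),
        "trigger_signals": signals,
        "tool_calls": tool_calls,
        "reasoning": reasoning,
        "action": action,
    }
    # Hive-style dt= partition in the key (hypothetical layout).
    key = f"audit/dt={at:%Y-%m-%d}/{case_id}.json"
    return key, json.dumps(body, sort_keys=True)


key, line = audit_record(
    case_id="case-42",
    signals=["risk_score=0.93", "new_device"],
    tool_calls=["get_account_history", "check_sanctions"],
    reasoning="High velocity from an unseen device; no sanctions hit.",
    action="block",
    at=datetime(2025, 3, 4, 12, 0, tzinfo=timezone.utc),
)
```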

SHAP-based explanations are stored alongside every model prediction, enabling agents to generate human-readable adverse action notices compliant with ECOA and FCRA. The EU AI Act requires conformity assessments for high-risk AI systems in financial services — the audit log architecture above provides the documentation foundation for those assessments.

Closing the retraining loop

Resolved cases — whether cleared, confirmed fraud, or SAR-filed — flow back into the offline feature store in S3. A scheduled SageMaker Pipeline retrains the XGBoost and RCF models on the updated dataset, evaluates performance against holdout data, and promotes the new model to production via SageMaker Model Registry if it clears performance thresholds. This continuous retraining loop is what allows the system to adapt to emerging fraud patterns — including AI-generated synthetic identities and behavioral mimicry attacks — without requiring manual model updates.
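The promotion decision at the end of the pipeline can be expressed as a small gate function. The metric names and thresholds here are illustrative assumptions; in a SageMaker Pipeline the equivalent logic would typically sit in a condition step that reads the evaluation report.

```python
def should_promote(candidate: dict, production: dict,
                   min_recall: float = 0.80,
                   max_fpr_increase: float = 0.0) -> bool:
    """Promote only if the candidate meets the recall floor, does not raise the
    false positive rate beyond the allowance, and improves precision-recall AUC."""
    if candidate["recall"] < min_recall:
        return False
    if candidate["fpr"] > production["fpr"] + max_fpr_increase:
        return False
    return candidate["auc_pr"] > production["auc_pr"]


# Hypothetical holdout metrics.
production = {"recall": 0.82, "fpr": 0.020, "auc_pr": 0.71}
better = {"recall": 0.85, "fpr": 0.018, "auc_pr": 0.74}
noisier = {"recall": 0.90, "fpr": 0.035, "auc_pr": 0.75}

promote_better = should_promote(better, production)
promote_noisier = should_promote(noisier, production)
```

The second candidate catches more fraud but is rejected because it would increase false positives, which is the kind of trade-off the gate makes explicit.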

Challenges and Safeguards

Adversarial AI and the arms race

The same generative AI capabilities that power fraud detection also power fraud attacks. In 2026, AI-generated synthetic identities pass basic liveness checks; AI-driven fraud mimics the behavioral patterns of legitimate users at scale. The mitigation is continuous adaptation: the retraining loop described above, combined with adversarial training data and ensemble models that combine multiple detection modalities, makes it significantly harder for attackers to tune their behavior to evade any single detector.

Bias and fairness

Fraud models trained on historical data can encode demographic biases — zip code correlations with race, device type correlations with income. Every model deployed in a decision that affects a consumer must undergo disparate impact testing before production promotion. SageMaker Clarify provides bias detection metrics (demographic parity, equalized odds) as part of the evaluation pipeline, and human oversight checkpoints are required for any model that shows disparate impact above threshold.
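To show what a disparate impact test measures, here is a hand-rolled sketch of two common metrics. It is not SageMaker Clarify's API; the group data is synthetic, and the 0.8 cutoff is the widely used "four-fifths" convention, adopted here as an assumption rather than a regulatory requirement for fraud models.

```python
def approval_rate(flagged: list) -> float:
    """Share of cases NOT flagged, i.e. the favourable outcome for the customer."""
    return sum(1 for is_flagged in flagged if not is_flagged) / len(flagged)


def disparate_impact(reference: list, comparison: list) -> float:
    """Ratio of favourable-outcome rates: comparison group over reference group."""
    return approval_rate(comparison) / approval_rate(reference)


def demographic_parity_difference(reference: list, comparison: list) -> float:
    """Difference in favourable-outcome rates between the two groups."""
    return approval_rate(reference) - approval_rate(comparison)


# Synthetic decisions: True means the model flagged the transaction.
group_a = [True] * 10 + [False] * 90   # 10% flagged
group_b = [True] * 30 + [False] * 70   # 30% flagged

di = disparate_impact(group_a, group_b)
dpd = demographic_parity_difference(group_a, group_b)
needs_review = di < 0.8  # four-fifths convention (assumed threshold)
```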

Adoption guidance

Start with low-risk, high-volume use cases where the cost of a wrong autonomous decision is recoverable — flagging low-value card-not-present transactions is a better starting point than blocking wire transfers. Measure false positive and false negative rates, build trust with compliance and operations teams, then expand the agent’s authority incrementally as performance data accumulates.
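The two error rates named above follow directly from a confusion matrix. A minimal sketch, using made-up labels where 1 means fraud:

```python
def error_rates(y_true: list, y_pred: list) -> dict:
    """Compute false positive and false negative rates from binary labels."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth and pred:
            tp += 1
        elif truth and not pred:
            fn += 1
        elif not truth and pred:
            fp += 1
        else:
            tn += 1
    return {
        # Share of legitimate transactions wrongly flagged.
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        # Share of fraudulent transactions that were missed.
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }


y_true = [1, 1, 0, 0, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0]
rates = error_rates(y_true, y_pred)
```

Tracking both rates per use case, rather than a single accuracy number, makes it easier to decide where the agent's authority can safely be widened.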

Human-in-the-loop design

Autonomous authority should be proportional to risk. The architecture enforces this through Step Functions conditional routing: cases below a dollar threshold with model confidence above a calibrated cutoff are resolved autonomously; cases above the threshold, involving PEPs, or triggering multiple independent risk signals are automatically escalated to a human review queue integrated with the bank’s case management system via Amazon EventBridge and third-party connectors (Jira, Salesforce, Zendesk).
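In Amazon States Language, this routing maps naturally onto a `Choice` state. The sketch below builds one as a Python dictionary and serializes it to JSON; the input field names (`$.amount_usd`, `$.confidence`, `$.involves_pep`, `$.independent_signals`), the target state names, and the example thresholds are all hypothetical.

```python
import json


def routing_state(amount_limit_usd: float, confidence_cutoff: float) -> dict:
    """Build a Choice state: resolve autonomously only when every condition holds."""
    return {
        "Type": "Choice",
        "Choices": [
            {
                "And": [
                    {"Variable": "$.amount_usd", "NumericLessThan": amount_limit_usd},
                    {"Variable": "$.confidence",
                     "NumericGreaterThanEquals": confidence_cutoff},
                    {"Variable": "$.involves_pep", "BooleanEquals": False},
                    {"Variable": "$.independent_signals", "NumericLessThan": 2},
                ],
                "Next": "ResolveAutonomously",  # hypothetical state name
            }
        ],
        # Anything that fails a condition goes to a human.
        "Default": "EscalateToHumanReview",     # hypothetical state name
    }


state = routing_state(amount_limit_usd=1000, confidence_cutoff=0.95)
state_json = json.dumps(state, indent=2)
```

Making escalation the `Default` branch means that missing or unexpected conditions fail toward human review rather than toward autonomous action.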

Measuring the Impact

The metrics that matter across fraud, AML, and cybersecurity workloads are consistent: reduction in time-to-decision, reduction in false positives, reduction in losses, and analyst hours reclaimed. Stripe Radar’s 38% fraud reduction figure reflects what’s achievable with well-tuned ML at scale. The incremental gain from agentic orchestration is the elimination of human latency in the investigation loop — which is where the largest remaining losses accumulate.

Track these signals to understand where agentic AI provides the most value and where human expertise remains irreplaceable. The goal is not full automation of every decision — it is intelligent allocation of human attention to the cases where it makes the most difference.

Conclusion: Building for the Adaptive Era

The shift from rule-based fraud engines to adaptive agentic intelligence is not a future trend — it is the present competitive baseline for financial institutions operating at scale. The four-phase AWS architecture described here provides a practical path: stream signals into a unified feature store, score them with an ensemble of purpose-built and custom ML models, orchestrate autonomous agents to close investigation loops, and wrap the whole system in the audit and explainability infrastructure regulators require.

The teams that will lead this era are not those that automate the most — they are those that automate intelligently, with clear authority boundaries, continuous bias monitoring, and the discipline to measure outcomes rather than just deploy capabilities.

The adaptive era of financial intelligence has arrived. The question is whether your architecture is ready to meet it. 🚀